Designed To Deceive

How Dark Patterns Exploit Children's Developing Minds

Your eight-year-old asks to spend £4.99 on "gems" in their favourite game. The request seems reasonable—it's their pocket money, after all. What you don't see is the deliberate design architecture that led to this moment: the countdown timer creating artificial urgency, the virtual currency obscuring real costs, the social pressure from other players' purchases, and the reward mechanism calibrated to trigger one more ask, then another.

This is far from accidental. It's engineered manipulation, and it has a name: dark patterns.

What Makes a Pattern "Dark"

Dark patterns are interface design choices that benefit the company at the user's expense. Originally coined by designer Harry Brignull in 2010 to describe e-commerce tricks, the concept has evolved as researchers document how these techniques permeate children's digital experiences—from educational apps used in classrooms to the gaming platforms where they spend hours unsupervised.

Unlike straightforward deception, dark patterns exploit the gap between how interfaces appear and how they actually function. A 2024 study analysing apps used by preschoolers aged 3-5 found manipulative design features in 95% of free apps, compared to 80% of paid apps.

The difference isn't about honesty versus dishonesty—it's about understanding the sophisticated psychological mechanisms these designs trigger, particularly in developing brains.

Consider the temporal dark pattern called "playing by appointment." Games require players to return at specific times to collect rewards or complete tasks. Miss the window, lose progress. For adults, this might be annoying. For children still developing executive function—the cognitive ability to plan, organise, and resist impulses—it creates a compulsion loop their brains aren't equipped to manage.

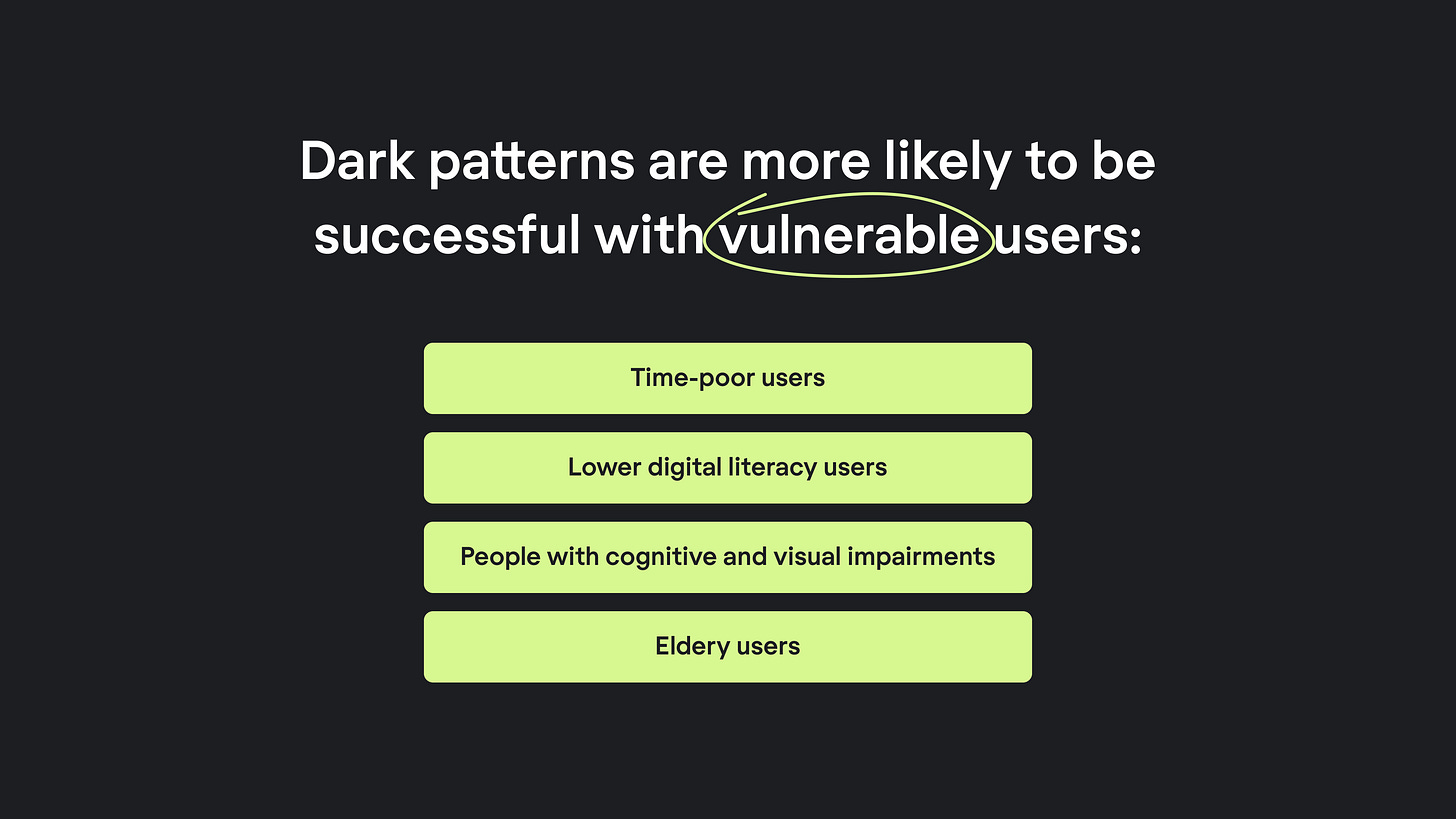

Why Children Are Structurally Vulnerable

A German study published in 2024 tested 66 fifth-graders (ages 10-11) on their ability to recognise dark patterns. When explicitly asked to search for manipulations, about half spotted overly complex wordings and colour-based tricks. Only 1 in 4 identified manipulative formulations designed to steer decisions.

The children understood they were being influenced. They described feeling "tricked" even when they couldn't articulate exactly how. Yet understanding manipulation doesn't equal resistance—especially when design exploits developmental vulnerabilities that exist regardless of awareness.

Dr. Jenny Radesky, a paediatrician studying manipulative design in children's media, identifies specific cognitive limitations that dark patterns target:

Immature executive functions. Children are still developing self-control and decision-making capabilities. They struggle to resist visual triggers adults might dismiss: sparkles, countdown clocks, cascading rewards. The prefrontal cortex—responsible for evaluating consequences and inhibiting impulses—doesn't fully mature until the mid-20s.

Reward susceptibility. Children's behaviour is powerfully shaped by positive reinforcement. Dark patterns exploit this through variable reward schedules (the same mechanism behind slot machines), streak systems that punish missed days, and achievement frameworks that feel like progress but primarily serve engagement metrics.

Concrete versus abstract thinking. Children struggle to grasp the abstract scale of algorithms and data harvesting. They're cautious about stranger danger but don't transfer that caution to algorithms curating their feeds or companies profiling their preferences. Virtual currencies compound this—"gems" and "coins" feel infinite, disconnected from the finite reality of money.

Parasocial relationships. When children bond with game characters or app mascots, they're more likely to comply with requests from these "friends." Research on apps targeting young children found mascot characters instructing users to make purchases, exploiting children's trust in familiar figures.

This is about neural architecture. A Scottish study examining 11- to 12-year-olds' mental models of online deception found that children constructed reasonable explanations for why they might be manipulated ("showing off," "causing mischief," "stealing data") but lacked frameworks for understanding systematic design manipulation by profit-seeking corporations.

Dark Patterns in Apps Parents Trust

The mechanics aren't abstract. They're operating right now in apps parents consider educational or harmless.

Duolingo: When Learning Becomes Guilt

Duolingo markets itself as a friendly language-learning tool. Parents see an owl mascot and bite-sized lessons—what could be harmful?

Behind the cheerful interface sits sophisticated behavioural manipulation. The app's streak system creates emotional investment: complete a lesson daily and your streak number climbs. Miss a day and it resets to zero. Research shows users are three times more likely to return daily when streaks are active.

The owl mascot has been deliberately redesigned over years to widen its facial expressions and maximise emotional impact. Notifications escalate: "Duo misses you!" gives way to images of a crying owl, then "You're about to lose your streak!" According to Duolingo, these "guilt trips" are 5-8% more effective at re-engagement than other methods.

Children don't recognise this as manipulation. They experience it as letting down a friend. When a cartoon owl appears sad, their developing brains respond emotionally before critically. The fact that they know the owl isn't real doesn't matter—the response operates below conscious reasoning.

The monetisation completes the pattern: users can pay to "repair" broken streaks or purchase "streak freezes." The free educational app becomes a system where children may pay money to avoid feeling guilty about missing arbitrary daily targets.
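To make the mechanism concrete, here is a minimal Python sketch of how a streak counter with paid "freezes" could work. It is an illustration only, not Duolingo's actual code: the function name `current_streak`, the `freezes` parameter, and the rule that one freeze covers one missed day are all assumptions made for the example.

```python
from datetime import date, timedelta


def current_streak(lesson_days, today, freezes=0):
    """Count days with a lesson, walking backwards from `today`.

    A missed day ends the streak unless a freeze (hypothetical paid
    item) is left to cover it. Simplified: real apps usually keep the
    streak alive until the end of the current day.
    """
    done = set(lesson_days)
    streak = 0
    day = today
    while True:
        if day in done:
            streak += 1
        elif freezes > 0:
            freezes -= 1  # the purchased item absorbs the missed day
        else:
            break  # first uncovered gap: everything earlier is lost
        day -= timedelta(days=1)
    return streak


# Nine lessons across ten days, with 7 March missed.
days = [date(2024, 3, d) for d in range(1, 11) if d != 7]
without_freeze = current_streak(days, date(2024, 3, 10))           # 3
with_freeze = current_streak(days, date(2024, 3, 10), freezes=1)   # 9
```

In this toy model a single missed day cuts the visible number from 9 to 3, and a single purchase restores it. The number a child has become attached to is exactly the thing offered for sale.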

Roblox: Virtual Currency and Real Money

Roblox presents itself as a creative platform where children build and play games. What parents often miss: it's engineered around Robux, a virtual currency that obscures real costs.

A child wanting an in-game item sees it costs 400 Robux. They don't see "£4." The psychological distance matters—research on children's apps shows virtual currencies are among the most effective ways to drive spending because young users don't connect digital tokens to limited family money.
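The conversion a child is being asked to do in their head is simple arithmetic, which is part of why hiding it is so effective. The Python sketch below assumes a flat rate of £0.01 per token, inferred only from the 400 Robux and £4 example above; real bundle prices vary, and the names `POUNDS_PER_TOKEN`, `real_cost`, and `price_label` are hypothetical.

```python
from decimal import Decimal

# Assumed rate, taken from the example of 400 tokens costing about £4.
# Actual exchange rates differ by bundle size and platform.
POUNDS_PER_TOKEN = Decimal("0.01")


def real_cost(token_price, pounds_per_token=POUNDS_PER_TOKEN):
    """Convert a price shown in virtual tokens into pounds and pence."""
    return (Decimal(token_price) * pounds_per_token).quantize(Decimal("0.01"))


def price_label(token_price):
    """Build the label a transparent interface could show instead."""
    return f"{token_price} tokens (about £{real_cost(token_price)})"


label = price_label(400)  # "400 tokens (about £4.00)"
```

Doing this conversion aloud with a child before any purchase restores the link between the token and the family's money that the interface leaves out.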

The platform layers additional pressure: games showcase what other players have purchased, creating social comparison. Limited-time items create artificial urgency. "Loot boxes" offer randomised rewards, the same variable reward schedule that makes slot machines addictive, now marketed to children.

Roblox has faced scrutiny similar to that which preceded Epic Games' Fortnite settlement, with regulators examining whether the platform uses predatory tactics to manipulate young people into spending. The FTC noted these designs effectively introduce children to gambling psychology while marketing the platform as child-appropriate creative play.

YouTube: The Autoplay Trap

YouTube's autoplay feature seems neutral—just loading the next video. For children, it's a designed path away from intentional viewing toward algorithmic control.

A child searches for a specific video. They watch it. Autoplay begins. The algorithm selects what comes next, optimised not for educational value or age-appropriateness but for watch time. Each subsequent video is chosen to keep the child watching longer.

Parents set a "one video" limit. The child agrees. But one video becomes ten because autoplay bypassed the agreement's premise—the child didn't choose to watch more; the system chose for them.

Autoplay is a core engagement mechanism. Disabling it requires navigating settings most children can't access and many parents don't know exist. The default is always "on."

The Pattern Across Platforms

These examples share common elements:

Exploiting incomplete development. Children's impulse control, future planning, and abstract reasoning are still forming. Designs target exactly these vulnerabilities.

Obscuring costs. Virtual currencies, "gems," "coins," and "tokens" disconnect spending from money's actual value and scarcity.

Manufacturing urgency. Countdown timers, limited offers, and "don't lose your streak" notifications create pressure incompatible with thoughtful decision-making.

Leveraging social pressure. Showing what friends bought, creating leaderboards, displaying others' progress—all exploit children's heightened sensitivity to peer comparison.

Making opt-out difficult. Default settings favour the company. Changing them requires navigation skills, knowledge that alternatives exist, and often parental access children lack.

The sophistication matters. These aren't accidental design choices. Duolingo A/B tests guilt messages. YouTube optimises autoplay algorithms. Roblox calibrates virtual currency pricing. Each decision reflects deliberate strategy backed by user data showing what works to maximise engagement and spending.

Parents often discover these patterns only after consequences emerge: unexpected charges, compulsive use, emotional distress when streaks break. By then, behavioural hooks are established and children genuinely feel they need what the app trained them to want.

The Gaming Industry's Laboratory

Gaming platforms function as testbeds for dark pattern refinement. Epic Games' $520 million settlement with the FTC in 2022 revealed the extent of this experimentation:

Fortnite made purchases intentionally confusing, enabled charges without consent, and locked accounts when parents disputed unauthorised transactions.

The FTC's investigation documented what researchers call "grinding"—making free versions so tedious that players feel forced to purchase time-saving options. A 2024 analysis of 1,496 mobile games found dark patterns weren't limited to obviously predatory titles. They appeared across games typically perceived as benign, suggesting these techniques have become industry standard rather than aberration.

Current lawsuits extend beyond Epic. The Gwinn case, filed in California in September 2024, challenges mobile gaming apps designed for young children specifically. The allegations detail sophisticated monetisation techniques: loot boxes with undisclosed odds, premium currencies hiding actual costs, artificial scarcity driving urgency, and interface designs that make accidental purchases easy while refunds are difficult.
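Why undisclosed odds matter can be shown with a little arithmetic. The Python sketch below uses invented numbers (a £0.99 box with a 2% chance of the wanted item) and hypothetical function names; it describes no specific game. If each box is an independent draw, the average spend to get the item once is the box price divided by the drop chance.

```python
import random


def expected_spend(box_price, drop_chance):
    """Average money spent before the wanted item drops once."""
    if not 0 < drop_chance <= 1:
        raise ValueError("drop_chance must be in (0, 1]")
    return box_price / drop_chance


def boxes_until_drop(drop_chance, rng):
    """Simulate one player opening boxes until the item appears."""
    opened = 1
    while rng.random() >= drop_chance:
        opened += 1
    return opened


# Hypothetical numbers: £0.99 per box, 2% chance of the rare item.
average = expected_spend(0.99, 0.02)  # roughly 49.5 pounds

# Individual players land far from that average in both directions,
# which is what makes the reward schedule "variable".
rng = random.Random(2024)
sample = [boxes_until_drop(0.02, rng) for _ in range(5)]
```

A box that looks like a 99p purchase is, under these assumed odds, closer to a £50 one on average. Without published odds, neither a child nor a parent can run this calculation.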

Research on Fortnite's impact on children aged 8-12 documented behavioural changes: mood alterations, reduced outdoor activity, social isolation, and in some cases, children stealing money to fund in-game purchases. Parents described not recognising their children's compulsive patterns until significant damage occurred—precisely because the manipulation was invisible, operating at the interface level rather than through overt coercion.

The Systematic Nature of the Problem

What emerges from regulatory documents and academic research isn't a story of a few bad actors but of systematic incentive structures. Platforms operate in competitive attention economies where engagement metrics determine advertising revenue and user retention drives valuations. Dark patterns aren't aberrations; they're optimisations.

A November 2024 systematic review of dark pattern scholarship identified a fundamental regulatory challenge: the "elusive nature" of dark patterns makes enforcement difficult. They're context-dependent, continuously evolving, and often operate through combinations of techniques rather than single identifiable tricks. What looks like a helpful recommendation might be an algorithm trained on thousands of A/B tests to maximise time-on-site rather than user benefit.

The research argues for a paradigm shift toward "diligent design"—proactive frameworks requiring companies to demonstrate consideration of user wellbeing in design processes, rather than reactive enforcement after harm occurs. This mirrors broader debates about whether tech regulation should focus on outputs (harmful content) or inputs (the business models and design choices that systematically generate harm).

For children specifically, the challenge compounds. Age verification systems raise privacy concerns and data breach risks. Blanket restrictions on access can harm children who benefit from platforms: Instagram makes 20% of teens feel worse but 40% feel better, according to one frequently cited report. One-size-fits-all approaches ignore developmental diversity and legitimate uses of technology for connection, learning, and creative expression.

Where This Leaves Parents

The regulatory landscape is shifting, but slowly. Enforcement is inconsistent. And in the meantime, children are navigating interfaces deliberately designed to exploit their developmental vulnerabilities.

The most honest thing we can say is that individual parental action cannot fully compensate for systemic design manipulation. No amount of online rules addresses temporal dark patterns. Media literacy education helps but doesn't neutralise sophisticated variable reward schedules. Technical controls like app limits work until they don't—when social pressure or compulsion loops override rational decision-making.

What remains within parental control is changing the conversation. Instead of framing this as children lacking discipline or parents failing to supervise, we can recognise we're asking families to defend against billion-dollar companies employing teams of behavioural psychologists and UX designers specifically to bypass conscious decision-making.

Specific actions that align with this understanding

Interrogate "free" offerings. Apps and games that cost nothing are monetised somehow. Before allowing downloads, investigate what the business model actually is. If it's in-app purchases, expect dark patterns optimised to drive those transactions.

Discuss mechanisms openly. When children describe games or apps, ask questions that reveal the underlying design: "How does the game decide what to show you next?" "What happens if you don't play for a day?" "Why do you think they made it work that way?" These conversations build metacognitive awareness—understanding that interfaces are designed with purposes that may not align with user interests.

Recognise the scope problem. Platforms introduce new features constantly. Dark patterns evolve faster than parental monitoring can track. This isn't a failure of vigilance; it's a structural mismatch between family resources and corporate capabilities.

Normalise opting out. Not every app needs to be on the device. Not every game requires participation. Children benefit from understanding that saying no to manipulative design is legitimate, not antisocial or fearful.

The Broader Stakes

Dark patterns matter beyond individual purchases or gaming addiction. They represent a fundamental question about the terms under which children grow up digital: Will interfaces respect their developing autonomy, or systematically undermine it?

When children learn their preferences can be manufactured through reward loops, that urgency can be artificially created, that their attention is a resource to be harvested—these aren't just technology lessons. They're lessons about power, agency, and what institutions consider acceptable in pursuit of profit.

The 2024 research showing 10-year-olds can spot some manipulations but not others suggests children are developing critical awareness in real time. But awareness without structural change simply shifts the burden: children must become experts in defending against manipulation while companies become experts in deploying it.

Regulatory action matters precisely because it reframes the question. Rather than asking how children can resist dark patterns, we can ask why these patterns should exist at all when the users are developing minds. Rather than celebrating children who successfully navigate manipulative interfaces, we can question why we've normalised environments requiring this navigation.

The research is clear on children's vulnerability. The documentation of dark patterns is extensive. The corporate resistance to regulation is predictable. What remains uncertain is whether corporations and governments will treat children's cognitive development as a space deserving protection from commercial manipulation, or as simply another market to be optimised.

Further Reading:

Ofcom's Online Safety Industry Bulletins (monthly updates on UK enforcement)

BEUC's 2025 report: "Children's Protection Online in the EU"

Academic study: "Growing Up With Dark Patterns" (2024, ACM)

FTC's Report on Dark Patterns (2022)

https://www.eleken.co/blog-posts/dark-patterns-examples
